Music has been one of the most widely consumed media throughout history, and the ways people consume it are closely tied to changes in technology and culture. My lab's sub-area focuses on changes in genre and song structure over time. My prediction matches popular opinion: popular music is a business, and to maximize value, creators must follow the demands of their consumers.

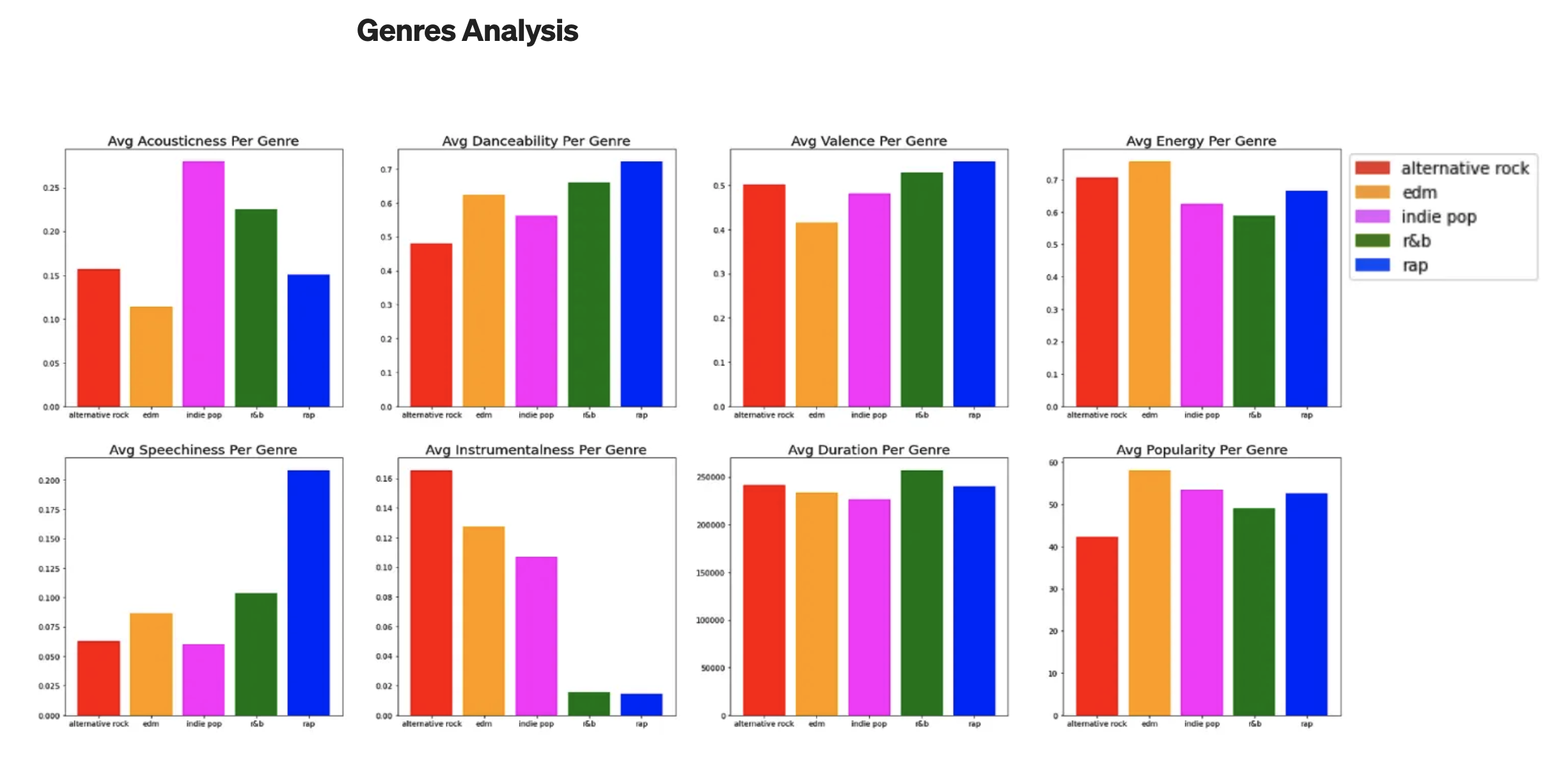

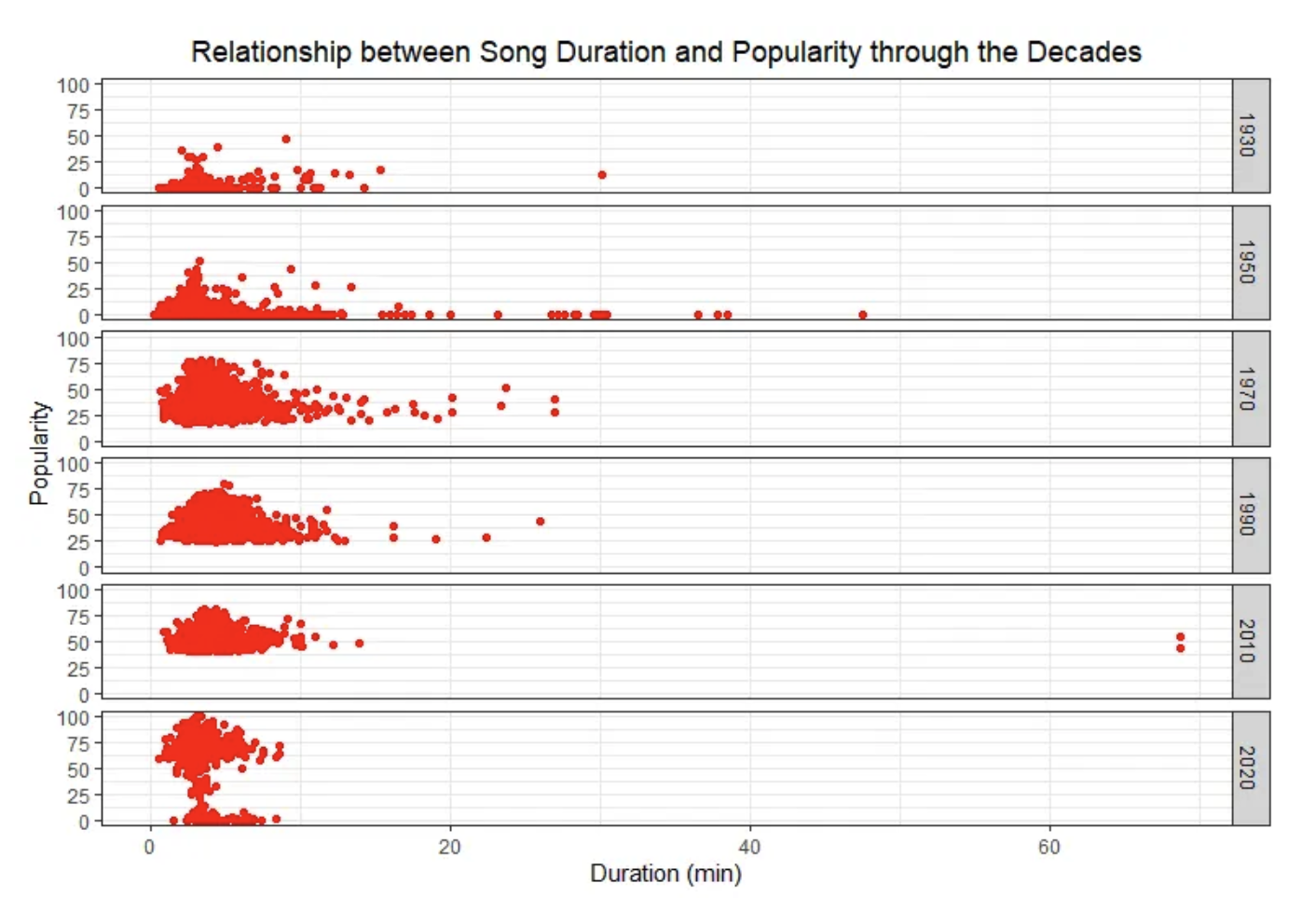

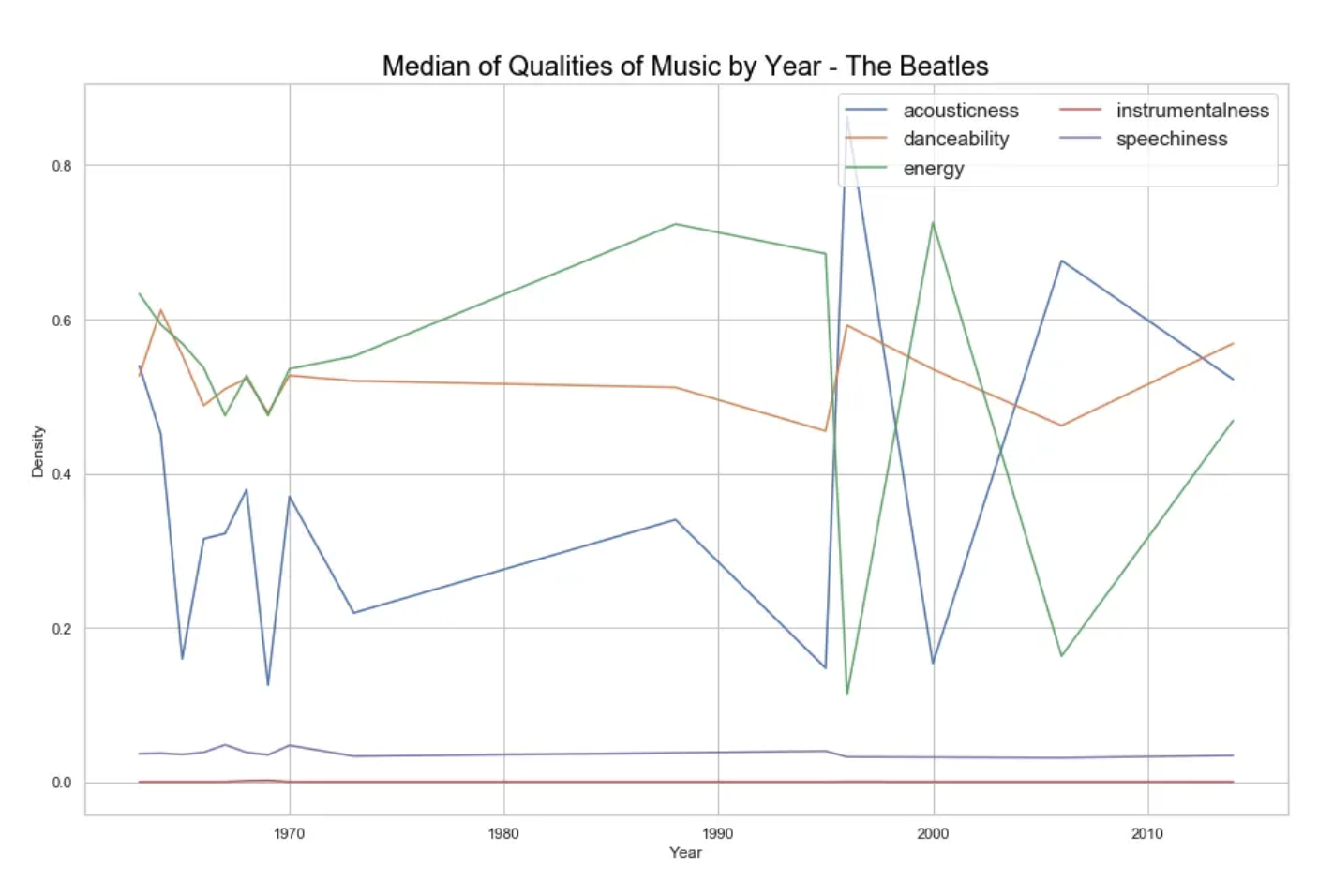

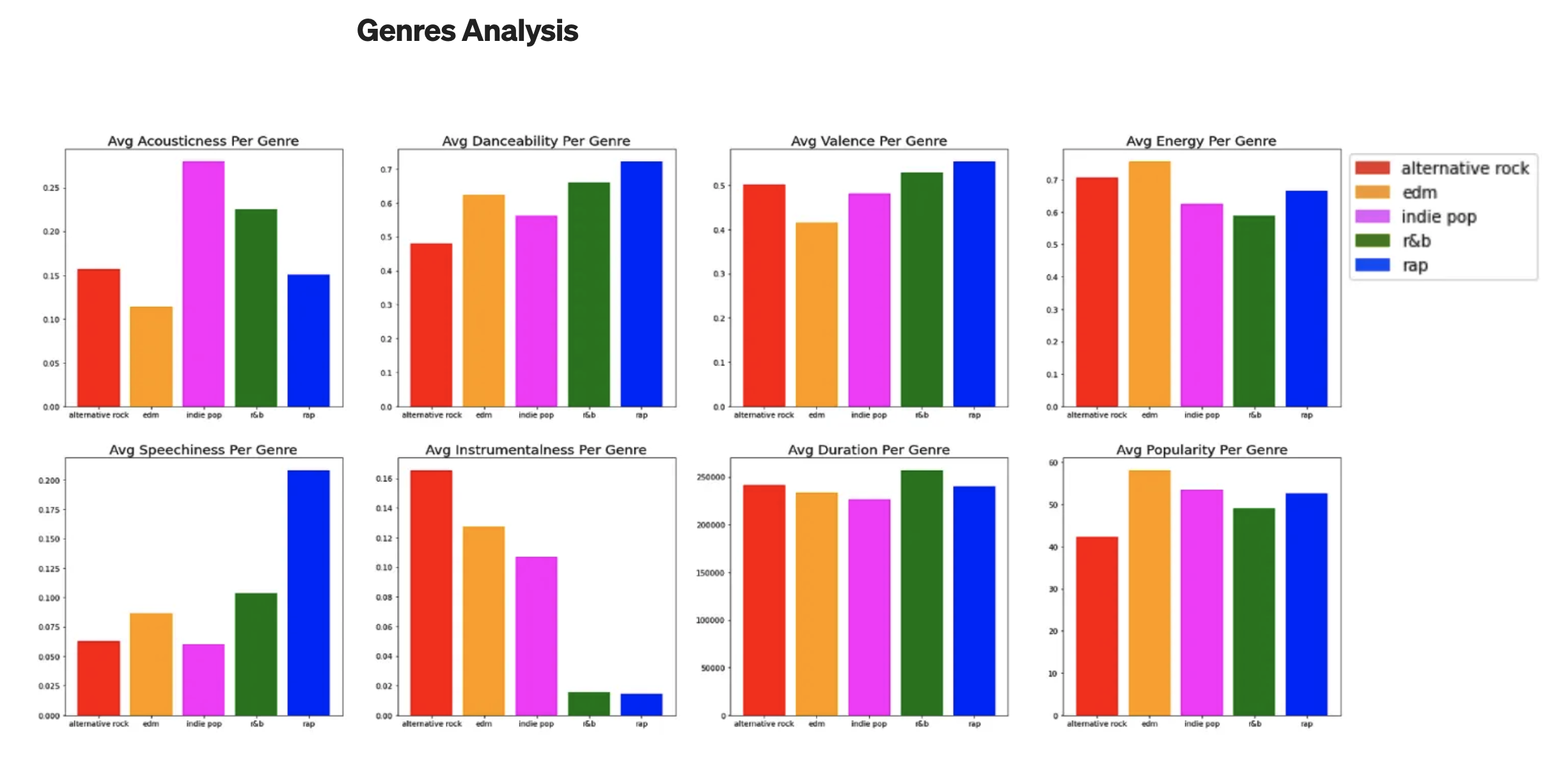

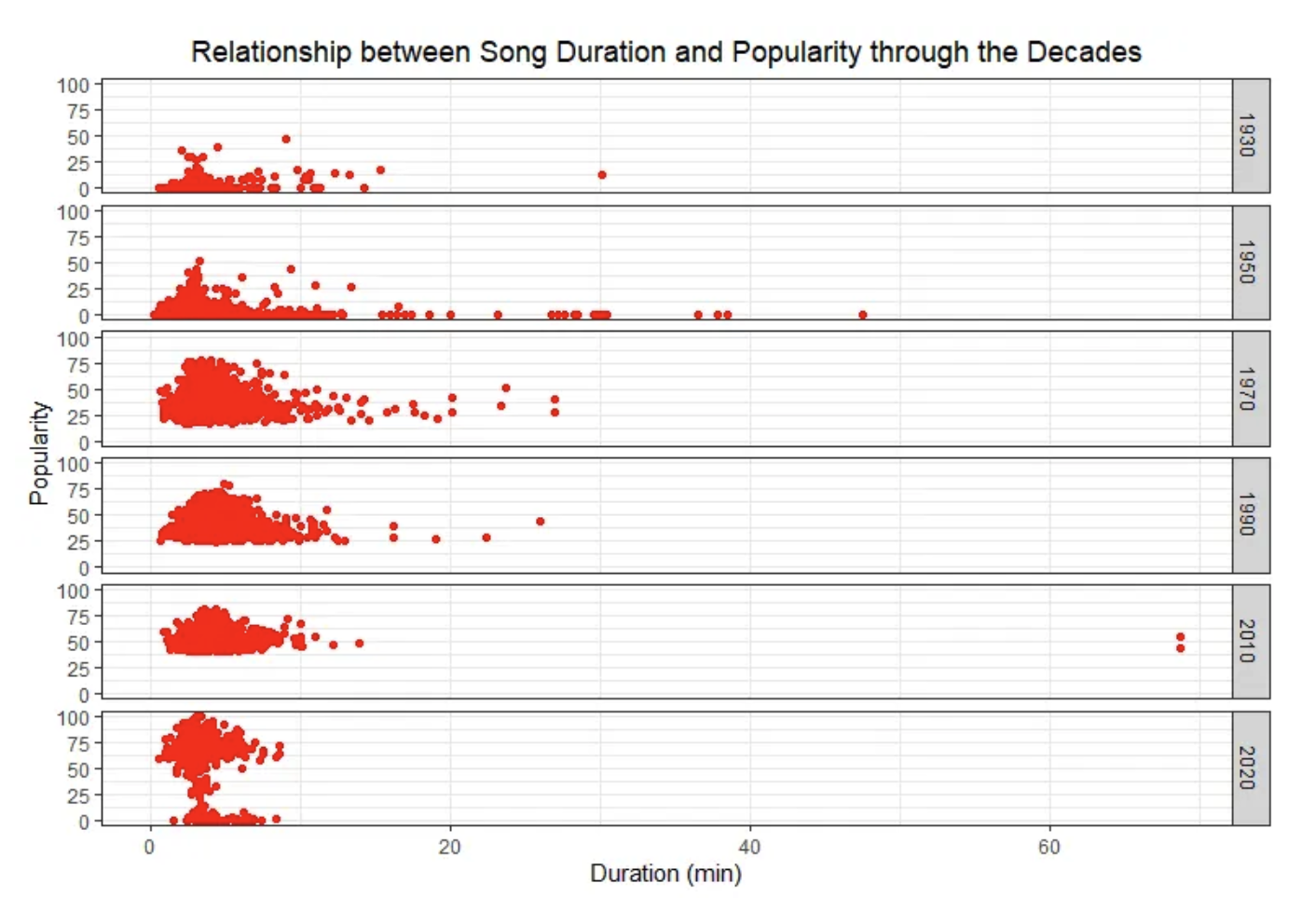

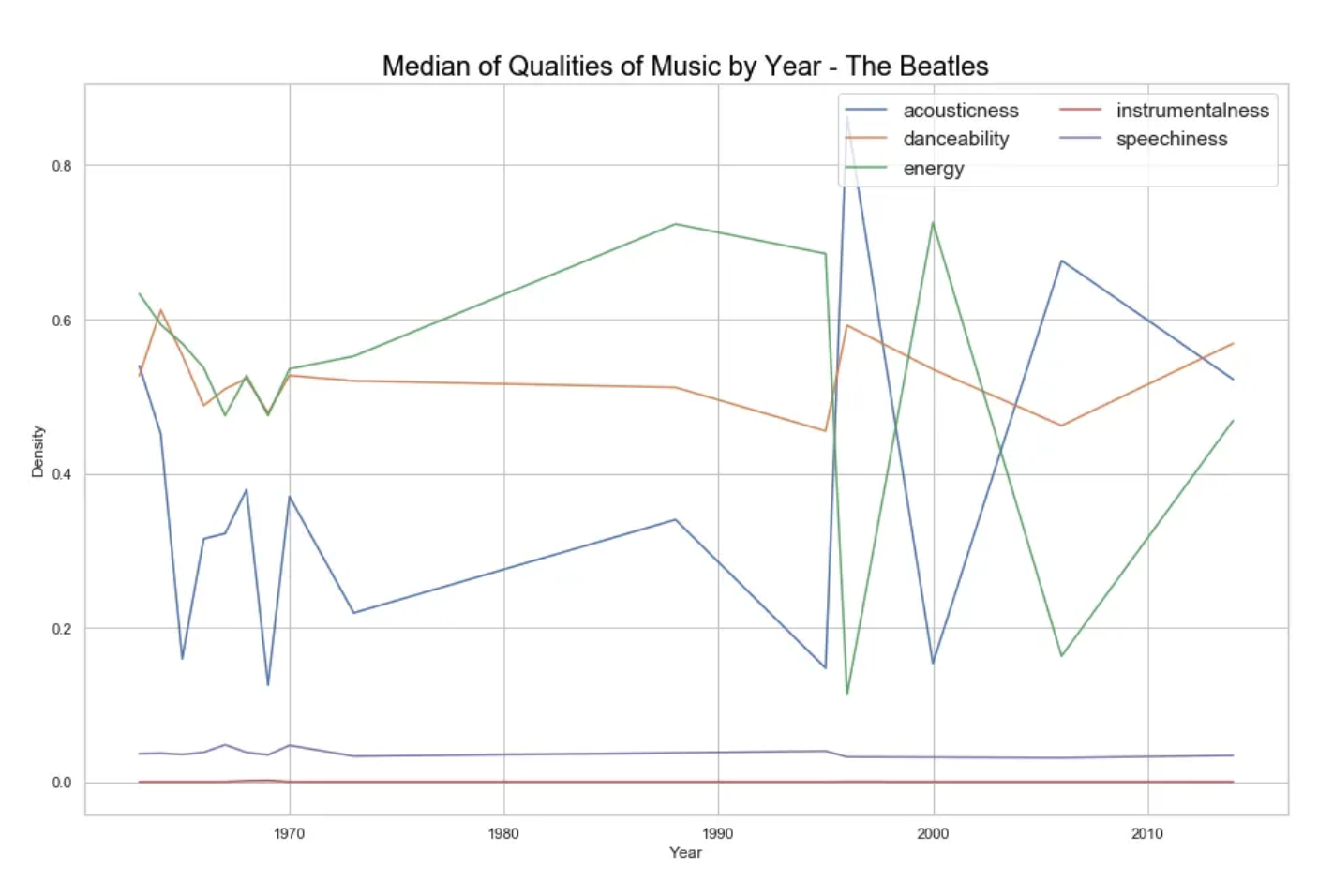

The raw data I focused on comes from a UCLA report that analyzed 160k songs from Spotify, using the Spotipy Python library to collect quantifiable metrics of music such as acousticness, danceability, energy, duration, instrumentalness, valence, popularity, tempo, liveness, loudness, and speechiness. It is important to note that some of these, like popularity, are somewhat subjective, since they depend on the metrics and algorithms that Spotify designed.
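As a rough illustration of that collection step, the sketch below pulls these metrics for a list of track IDs with Spotipy. It assumes an authenticated `spotipy.Spotify` client and a Spotify app that has access to the audio-features endpoint. The helper name `fetch_audio_features` and the `FEATURES` list are my own choices for illustration, not something taken from the UCLA report. Popularity is not part of the audio-features response (it lives on the track object), so it is left out here.

```python
from typing import Any, Dict, Iterable, List

# Keys of Spotify's audio-features object that match the metrics named above.
FEATURES = [
    "acousticness", "danceability", "energy", "duration_ms",
    "instrumentalness", "valence", "tempo", "liveness",
    "loudness", "speechiness",
]


def fetch_audio_features(client: Any, track_ids: Iterable[str],
                         batch_size: int = 100) -> List[Dict[str, Any]]:
    """Return one dict per track with its ID and the selected audio features.

    `client` is assumed to be a spotipy.Spotify instance (or any object with a
    compatible `audio_features` method). Spotify accepts at most 100 IDs per call.
    """
    ids = list(track_ids)
    rows: List[Dict[str, Any]] = []
    for start in range(0, len(ids), batch_size):
        batch = ids[start:start + batch_size]
        for item in client.audio_features(batch) or []:
            if item is None:  # tracks without audio features come back as None
                continue
            row = {"id": item["id"]}
            row.update({name: item.get(name) for name in FEATURES})
            rows.append(row)
    return rows


# Example usage (needs network access and Spotify API credentials):
#   import spotipy
#   from spotipy.oauth2 import SpotifyClientCredentials
#   sp = spotipy.Spotify(auth_manager=SpotifyClientCredentials())
#   rows = fetch_audio_features(sp, ["<track id>", "<another track id>"])
```

Each returned row is a flat dictionary, so the result can be written straight to a CSV file or spreadsheet.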

I have built my own data sets using Spotipy in the past, and the resulting data collection is simply a large spreadsheet. In my past research, I have found it extremely difficult to find strong correlations in music data, as music is very subjective and volatile. However, it was interesting to see the findings and visualizations that the UCLA paper produced using a different approach. The paper provides several visualizations to make the collected information interpretable:

Figure 1.2 - DataRes at UCLA.

Figure 1.2 - DataRes at UCLA.

Figure 1.3 - DataRes at UCLA.

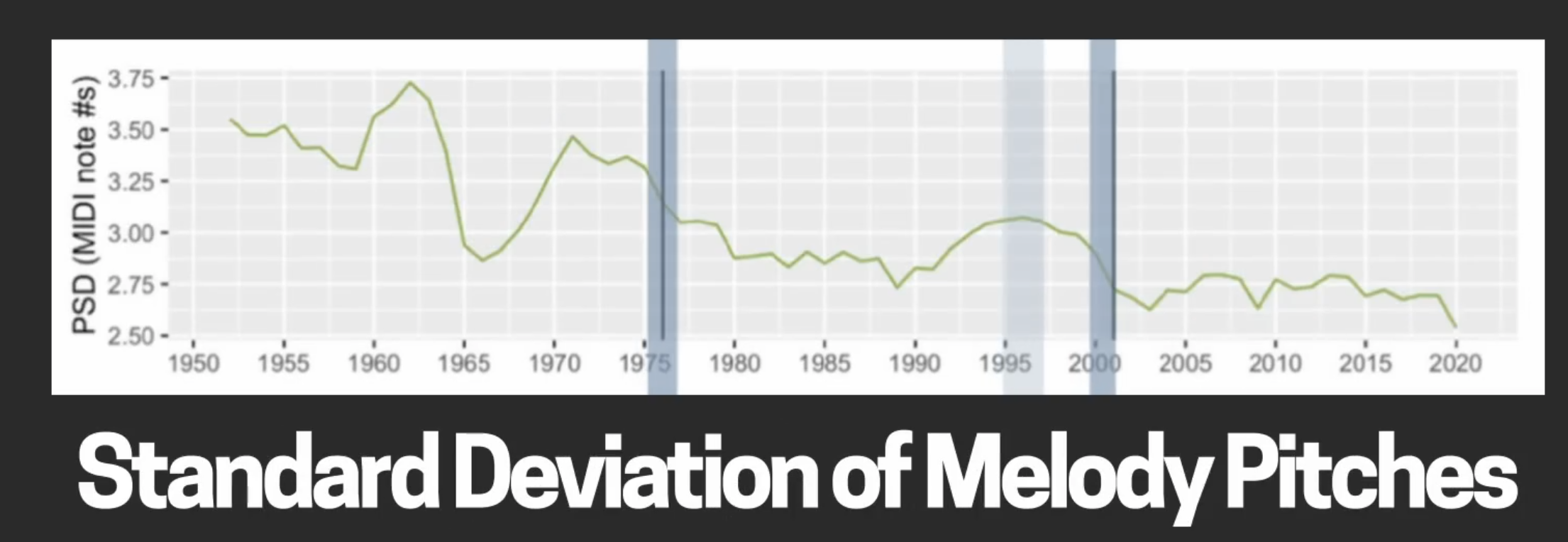

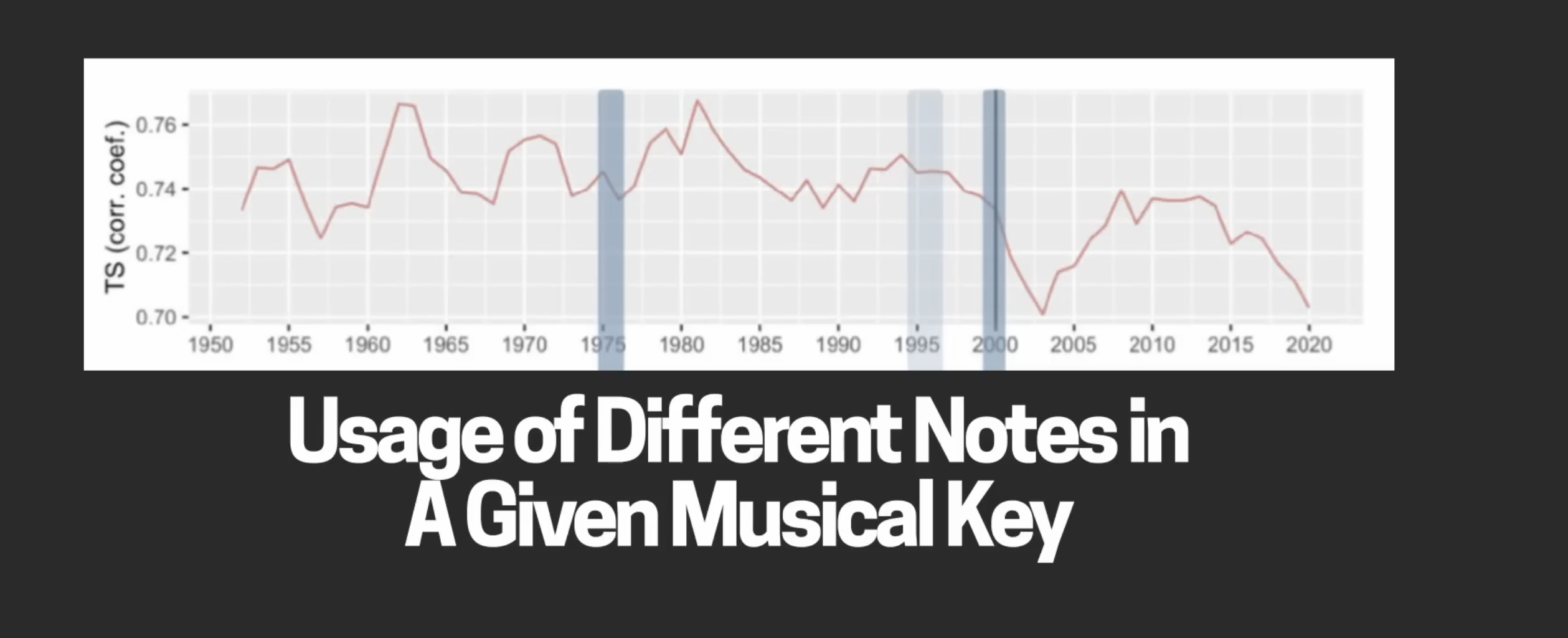

Another study, from Queen Mary University of London, evaluates the simplification of pop music melodies over time by looking at lyrics and musical variation in keys.

Figure 2.1 - Hamilton, M., Pearce, M.

Figure 2.2 - Hamilton, M., Pearce, M.

I worked on a data project that used a dataset of electronic music to find trends over time, and my results were similar: the truth about analyzing music with data is that it is practically impossible to find strong correlations. Music tends to be a reflection of culture, and culture is a reflection of communities of thousands or millions of people interacting.
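To make "strong correlation" concrete, here is a minimal sketch of the kind of check I mean: the Pearson correlation between each audio feature and popularity. The function names and the sample rows are hypothetical and exist only to show the mechanics. The numbers are made up, not real Spotify data, so the printed values say nothing about actual music.

```python
import math
from typing import Dict, List, Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of the same length (>= 2)")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)


def correlations_with(rows: List[Dict[str, float]], target: str,
                      features: Sequence[str]) -> Dict[str, float]:
    """Correlate each feature column with the target column."""
    target_values = [row[target] for row in rows]
    return {name: pearson([row[name] for row in rows], target_values)
            for name in features}


# Hypothetical rows, invented for illustration only.
sample_rows = [
    {"energy": 0.81, "tempo": 128.0, "popularity": 40},
    {"energy": 0.35, "tempo": 90.0, "popularity": 62},
    {"energy": 0.66, "tempo": 140.0, "popularity": 15},
    {"energy": 0.52, "tempo": 110.0, "popularity": 55},
]
print(correlations_with(sample_rows, "popularity", ["energy", "tempo"]))
```

Values close to 1 or -1 would indicate a strong linear relationship, while values near 0 mean there is little linear relationship to speak of.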

The paper did observe an overlap between lofi and hiphop tracks, stating that this relationship is “most probably because lofi beats are actually sampled from these tracks.”

There are other music analysts who look for trends that define popularity and who have more expansive data sets, but music as a whole is too volatile to predict. Another interesting insight is the use of classification algorithms to study behavioral patterns in music listening, and then to adjust and optimize for user engagement. Examples of this include how quickly a song gets to its chorus, the absence of a bridge, and other musical choices in popular music designed to hook younger listeners.

If the goal is to predict future trends, then the study should be hyper-focused on specific sub-genres or even individual artists: look at what worked for artist X and how that can be applied to future projects. It would also be interesting to study musical trends against influential social movements in history to try to understand the origin of new genres and influences.
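A narrow study like this could start from something as simple as the sketch below, which filters a data set down to one sub-genre and averages a single feature per release year. The `genre` and `year` fields, the function name `yearly_mean`, and the sample rows are all assumptions made for illustration. A real data set would need those columns added, since they are not part of the audio metrics themselves.

```python
from collections import defaultdict
from statistics import mean
from typing import Any, Dict, List


def yearly_mean(rows: List[Dict[str, Any]], genre: str,
                feature: str) -> Dict[int, float]:
    """Average one feature per release year for a single sub-genre."""
    by_year = defaultdict(list)
    for row in rows:
        if row["genre"] == genre:
            by_year[row["year"]].append(row[feature])
    # Sort by year so the result reads as a timeline.
    return {year: mean(values) for year, values in sorted(by_year.items())}


# Hypothetical rows, invented for illustration only.
tracks = [
    {"genre": "lofi", "year": 2019, "tempo": 80.0},
    {"genre": "lofi", "year": 2019, "tempo": 90.0},
    {"genre": "lofi", "year": 2020, "tempo": 84.0},
    {"genre": "hiphop", "year": 2020, "tempo": 140.0},
]
print(yearly_mean(tracks, "lofi", "tempo"))
```

The same function works for a single artist if the rows carry an artist field and the filter is changed accordingly.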

I would love to have a platform for real-time song analysis. Instead of going through Spotify accounts, Python, and data visualizations, it would be great to have a platform where the user can drop in a song and get instant insights, such as:

- Durability, most-listened section (like YouTube), and a visualization of loudness (like SoundCloud).
- AI tools to split acoustic elements from vocals.
- The option to import several songs or playlists and compare elements across them.

This could be a fun tool for comparing songs and artists, as well as a useful one for musicians looking at trends in the industry.